Engineering AI That Matters — Navan Engineering Manager Raju Dandigam

Modern product development demands speed, but speed without structure can lead to chaos. How do you balance rapid iteration with maintaining a stable system?

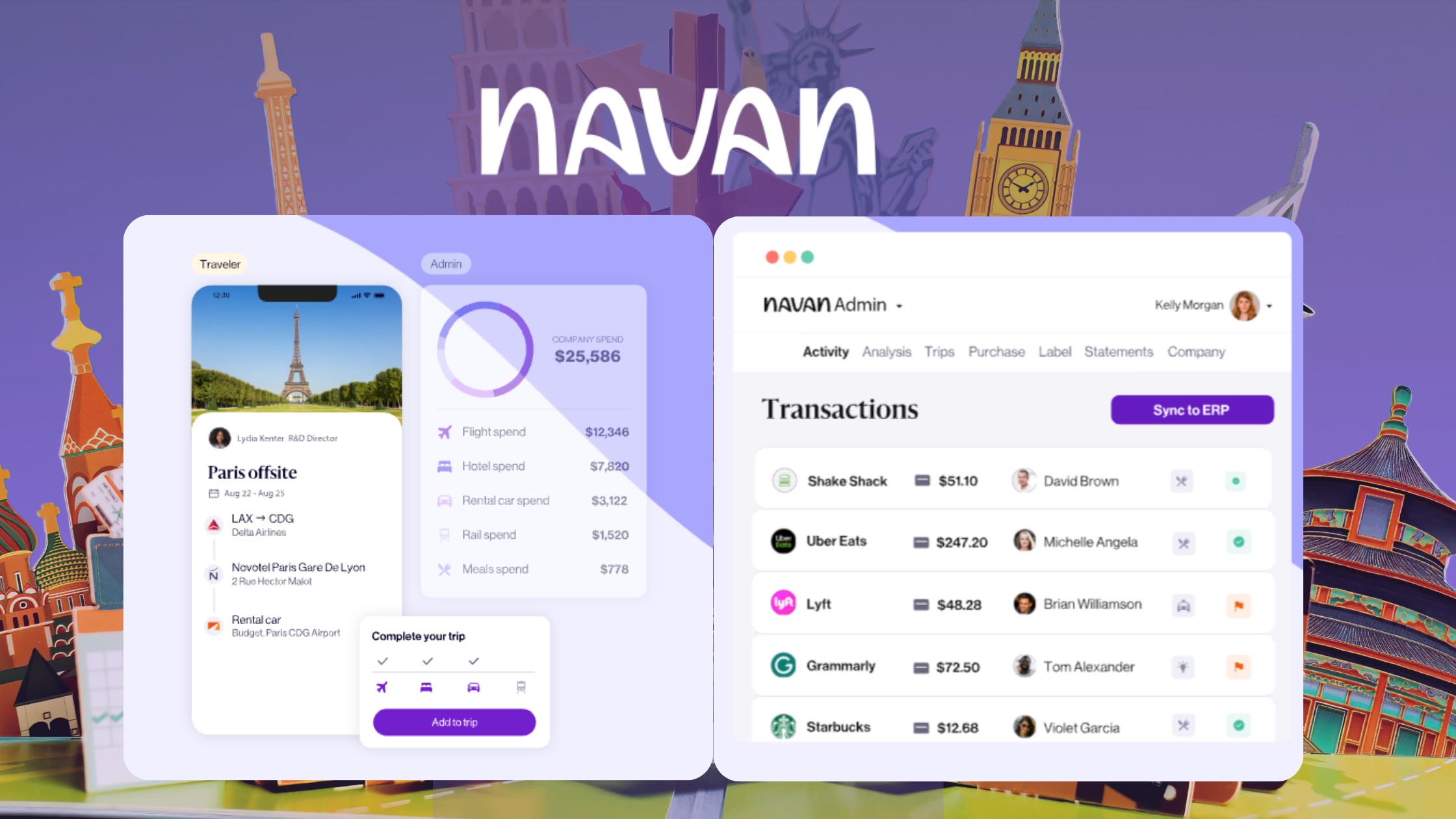

This week's VentureFuel Visionary is Raju Dandigam, Engineering Manager at Navan, an all-in-one travel, corporate card, and expense management platform built for modern enterprises. He shares what it takes to build high-impact products that scale, drawing on more than 14 years of experience spanning front-end engineering, full-stack development, DevOps, and team leadership.

In this episode, Raju brings a pragmatic perspective on modern engineering execution, from leading the development of Navan's rewards and personal travel experiences to implementing automation that dramatically reduces production bugs. His core takeaway: focus on solving meaningful problems, build what's core to your advantage, and partner for everything else in a world moving faster than ever.


Episode Highlights

- Building Sustainable Speed in Engineering – Raju emphasizes that shipping fast isn’t just about moving quickly; it’s about creating processes, architecture, and quality pipelines that let teams sustain velocity without breaking systems or creating rework.

- Start Small, Scale Confidently – He outlines a phased approach for rolling out new features, including AI: start with a small cohort, validate impact, then expand, ensuring user trust and system stability.

- Analytics-Driven Product Decisions – He also explains that meaningful metrics, like adoption, completion, or behavior change, drive decisions and roadmap planning, while vanity metrics that don’t influence action are avoided.

- Integrating AI With Purpose – The discussion highlights how AI must solve real user pain points to be valuable, rather than being added for novelty. Success comes from building features that reduce effort, improve experience, and maintain trust.

- Operational Disciplines of High-Performing Teams – Raju breaks down the traits of teams that ship at scale: clear processes from idea to release, built-in quality, alignment across dependencies, and regular retrospectives to continuously improve execution.


Fred Schonenberg

Hello, everyone, and welcome to the VentureFuel Visionaries podcast. I am your host, Fred Schonenberg. Today, I'm joined by Raju Dandigam. Raju is an engineering leader working at the intersection of modern front-end architecture, AI-powered product development, and cloud-native delivery. He is an engineering manager at Navan, where he helps lead teams building and scaling high-impact product experiences for real enterprise workflows.

He has over 14 years of experience in front-end and full-stack engineering, DevOps, and people leadership, and he brings a practical lens to what it takes to ship fast without sacrificing quality. So today, we're going to unpack how teams scale front-end systems, how engineering leaders use analytics to drive better product decisions, and what it really takes to build AI features that feel genuinely useful and scale. Raju, it is so nice to have you on the show. Welcome.

Raju Dandigam

Thank you. Thank you. Yeah. Nice to be here.

Fred Schonenberg

So, you lead engineering teams building high-impact enterprise workflows at Navan. I'm curious: from your perspective, how does modern engineering execution translate into real business advantage?

Raju Dandigam

Yeah, sure. So, for me, modern engineering execution creates a business advantage when engineering helps the company move faster with confidence. It's not just about shipping features; it's about setting up an environment where the team can move fast with confidence. Basically, that means having strong, clear alignment early in the process.

That means product, design, quality, and engineering teams are aligned on what problem we're going to solve, what the scope is, what the technical risks are, what the rollout plan is, and what success looks like at the end. If you have that clarity at the beginning, teams avoid rework and move much more effectively. And now AI is becoming part of the core execution.

At the same time, using it effectively, with enough guardrails, is key. AI is now present across planning, coding, and testing, in every phase of the development cycle. Having review points, permissions, and guardrails around AI, along with a broader plan for overall usage, helps the team execute much faster. Overall, modern execution becomes an advantage only when teams have the clarity, confidence, and infrastructure in place to move faster.

Fred Schonenberg

I love it. And for anyone listening who doesn't know what Navan does, can you give a little background on the company, its products, and the customers you work with?

Raju Dandigam

Yeah, sure. So Navan is one of the leading travel and expense platforms. Our customers include Unilever and Netflix, and there are more than 10,000 customers overall, spanning enterprises and companies of other sizes. The products we build cover the travel side and the expense side, helping customers manage their travel.

And obviously, expenses are also included as part of the solution. I mainly work on the travel side. We let users book flights, hotels, rail, cars, whatever they need, all on one platform. Personally, I work on rewards and personal travel. We've also been working on Navan Edge, which is basically an AI chat-based assistant for making your bookings, and which we recently launched. So that's the high-level picture. It's been a big time for us: we've had our IPO and are now a public company.

Fred Schonenberg

Yeah, it's amazing. And all of that has to be simple and useful for those enterprise customers, because there are many ways they could do it. You want to make sure it's seamless, that the user experience is easy, and that you're innovating to make it faster and easier to find the right flights at the right price and the right time, with everything organized back to the enterprise so it stays in line with their travel policies and expense rules.

Raju Dandigam

Got it. Yeah. That's true.

Fred Schonenberg

One of the things we hear often is this idea of shipping fast. Now that you're such a large company, one of the interesting questions is how you move quickly and sustain that velocity in a scaled product organization. You can't just ship things out and regroup afterwards the way a startup can. You need to make sure there's some control and that it's going to work.

Raju Dandigam

That is correct. Yeah, that's absolutely right. "Shipping fast" can be misleading, because any team can move fast for a short period of time. The real challenge is being consistent: shipping fast without breaking the system or creating cleanup work later, right?

So the challenge is more about building a consistent environment where we can move faster, but at the same time maintain quality and user trust. That again comes back to having alignment in the process, but also building quality directly into the process.

That is one of the key ways we can actually ship faster in a scaled organization. It involves having a test plan, a rollout plan, monitoring and alerts, and whatever else is needed to understand what has been shipped and how it is performing. Can we detect challenges or ongoing issues even before the customer reports them?

All of that quality pipeline has to be built into the system so the team can sustain velocity while thinking through all the use cases and rollout strategies: whether to release everything at once, ship a smaller feature, or do a phased rollout. These processes help the team move fast, safely.

Another key point is the architecture. Do we have an architecture that supports change? System complexity becomes the biggest enemy of speed. We should have modular systems, well-designed APIs, and backward compatibility so that if the team needs to move back and forth or change release versions, it’s simple and easy to do. And then, obviously, AI is helping throughout these processes and in the shipping-fast approach. It can be effectively used to maintain that sustainable velocity.

Fred Schonenberg

Yeah. Sorry to interrupt you. As you were speaking, I was thinking about how AI might help with some of that complexity. At the same time, a big challenge is making sure the data is clean and accurate, and that the architecture makes sense to the AI, at least to a degree, because if you ask it to act, it can misinterpret the complexity. Sometimes, obviously, it can also help bring clarity.

But I'm curious how you balance that. When you go to launch something new, you might realize, "Oh, that's going to impact these 10 things over here," but decide it's worth fixing now, because you need to build that modular foundation as you start working more with AI.

Raju Dandigam

So yeah, when we build something new, we also have to make sure we aren't breaking the existing system. The efficient way is to extend it as a V2, or a new version, while keeping control so we can release it to a smaller group or as an experiment. Then we make sure it goes well, and if there is an impact, only a small cohort of users has been using the new thing we released.

We make sure there is no impact on existing functionality or features. Once we build that confidence, maybe over a few days or a week, we can open it up to the broader group. This approach helps most when you want to build something drastically new, or introduce AI. The ideal, efficient way is to start small, experiment, and then expand.
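Raju doesn't describe how Navan gates its cohorts, so what follows is only a minimal sketch of the "start small, then make it broader" pattern, assuming users are assigned to cohorts by a stable hash of their ID. The function names `rollout_bucket` and `sees_new_version` and the feature key `booking_v2` are hypothetical illustrations, not a Navan or feature-flag vendor API.

```python
import hashlib


def rollout_bucket(user_id: str, feature: str) -> int:
    """Hypothetical helper: map a user to a stable bucket in [0, 100).

    Salting the hash with the feature name gives each feature an
    independent split, so the same users aren't always the guinea pigs.
    """
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def sees_new_version(user_id: str, feature: str, rollout_percent: int) -> bool:
    """Return True if this user is in the cohort that gets the new (V2) path."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    # Buckets below the threshold get V2; everyone else stays on the existing version.
    return rollout_bucket(user_id, feature) < rollout_percent


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10_000)]
    # Widen the cohort stage by stage, only after the previous stage looks healthy.
    for percent in (1, 10, 50, 100):
        exposed = sum(sees_new_version(u, "booking_v2", percent) for u in users)
        print(f"{percent:>3}% stage: {exposed} of {len(users)} users on V2")
```

Because the bucket depends only on the feature key and the user ID, anyone who received V2 at the 10% stage is still on V2 at 50%, so widening the cohort never flips users back to the old version.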

Fred Schonenberg

Yeah. I love the "start small" approach. It can relieve some of that short-term delivery pressure. You can learn quickly from it, and then plan the larger, longer-term rollout once you've debugged it a little. One of the things I know you've written and talked about is analytics-driven engineering. I'm curious whether there are metrics you'd advise product teams to pay attention to, versus vanity metrics: things that sound good but don't really move the needle.

Raju Dandigam

That is true. Meaningful decisions come from metrics. Analytics help us decide what we're going to build and how we're going to build it. To avoid vanity metrics, we have to start with the product and look at user outcome metrics: did the feature improve adoption, conversion, completion rate, or retention? Those questions come before even internal delivery metrics.

The real question is whether the feature changed user behavior in a useful way. Metrics tell us whether a release is helping users, or where they're dropping off. At the same time, we can't track every metric. The question is: does this metric change any behavior or decision? When I see this metric, am I making any decision? If a metric isn't helping make any decision, it's a vanity metric, and there's no point to it. The metrics tied to a meaningful decision are the ones we focus on and build into our product.

And obviously, we can also use metrics to see how the product is doing. We can release a smaller feature, see how it's performing, look at the data, and understand whether it's really worth spending more time on it, or whether it's better to pivot to another product or feature. So yes, metrics are the key way to decide what to build in a product organization.

Fred Schonenberg

And is that data—whether it’s product or behavioral data—what’s influencing your roadmap and decisions in terms of what to build next or what to scale?

Raju Dandigam

Yeah, absolutely. As far as I know, that's generally how most product-led organizations move forward. Metrics are one of the key inputs to our roadmap. We start with the pain point we're solving: what data backs up that this is the problem we're going to solve, or the problem we're putting on our roadmap? Is there any data showing that this is a real pain point?

Obviously, not every problem will have data. If we don't have it, the question is: are we going to spend an entire quarter, or a lot of time, figuring it out? Is that really worth it? No. The better way is to start with an MVP, get data as soon as possible, and see whether it's heading in the direction of the initial hypothesis. If it is, move forward. The first goal is to get the data and figure out whether the hypothesized problem is a real pain point for the user. If it is, put it on the roadmap. That's how it starts. And yes, metrics, or whatever data we have, are key to the roadmap in any product organization.

Fred Schonenberg

So one of the things I'm most excited to talk to you about is the Navan Edge AI product, because you've actually shipped AI features into real products that people are using. A lot of folks are still either using AI on the back end for efficiency gains or talking about its potential without having rolled it out in a meaningful way. Can you talk a little about how you moved that product from a demo into something users actually rely on?

Raju Dandigam

Got it. Yeah. So, the biggest difference is that AI looks smart in demos, but the real challenge is finding the user's real pain point. Figuring out where to integrate AI is the key challenge. Adding AI features to a product is easy with today's infrastructure; the question is whether they actually solve a meaningful problem. Does the user really need AI there? Fancy demos are easy. The hard part is finding the place where AI actually reduces user effort, improves the current experience, or saves real time. If that gap is clear, people will try it and come back. But if the gap isn't real and we're just trying to fit AI in, users may not trust it.

So the key is building trust and then maintaining it. That matters more than showing off the intelligence of our product. Trust comes when users see, "Hey, this is where it reduces my effort and makes my process better," in a user flow or while they're using the product.

Then they build trust, they come back, and that's when AI adds value to our product. So whatever the product or the AI feature, it's important to know where it fits and then build on top of it. Making it genuinely useful to the user is what helps the companies and organizations that need to integrate it.

Fred Schonenberg

Yeah, it's interesting. I always talk about starting with the pain point, which is something you've shared, and a pain point that's important to solve for users, right? There are things that might be annoying or that users don't love, but unless your solution makes a meaningful difference, it won't be important to them. They don't want it, right? It's not a pain worth solving. So I think that's a very interesting distinction.

Raju Dandigam

That's true. Yeah, that's correct.

Fred Schonenberg

So I want to jump to high-growth product organizations like yours. Do you think there are any operational disciplines or character traits that separate the teams that consistently ship at scale versus those that are maybe stuck just in firefighting mode?

Raju Dandigam

Got it. So basically, the main difference is that strong teams build a clear operating process across the full product cycle. Firefighting teams mostly react. The difference is a disciplined process from idea to release, from planning product features through the release itself. That means how features are selected, what data supports them, how hypotheses are validated, how the work is planned, how dependencies are identified, and how execution is tracked. Teams that ship well do not start with code; they start with clarity.

They start with planning, and then quality and reliability are built into execution. That means we define the prerequisites. What do we need to build this? Do we have any dependencies? Does another team need to finish something first? Do we have any infrastructure issues? All of that planning has to happen very early, in the initial phase.

Then the team gets into the technical or architecture design, and then into coding. Once these things are in place, the process is set up, and we repeat it. And at the end, once we deliver, or once we're done with the product, the other important step is the retrospective.

At the end, we look at what went well and what didn't. If you can identify three things (what's working, what's not working, and what has to be improved), you can improve the process. Those steps are there to identify process improvements, not to point at any individual or team, right? The idea is to improve the process and make that the standard while we're operating and executing. So these are the operating disciplines: a clear process, built-in quality, and regular learning loops.

Fred Schonenberg

Raju, one thing before we get to the speed round, where I'm going to ask you a couple of quick questions just to get your gut reaction. I'm curious: obviously, you're a builder; that is your background. A lot of corporate strategy teams we talk to think in a framework of buy, build, or partner. From your perspective, do you look outside your own four walls at startups or new technologies, and how do you decide when to bring them in, whether as a partnership or as a vendor?

Raju Dandigam

Mostly, we look at a product and ask whether there's a need to bring it into our organization, into the engineering cycle, or into the development team. If we realize it's going to save us time, bring more clarity, reduce maintenance, or add value in some other way, we'll experiment with it for some time and see what value it adds. Then we decide whether to keep using it, or maybe sign a contract.

But then the question is: do we build or do we buy? I would say that if a product is available and already proven, buying makes sense. If you start building everything yourself, you get distracted from your core goals, whether that's the core product or the company's actual goals. So yeah, if something is available and makes meaningful sense for the team, buying makes sense.

Fred Schonenberg

Yeah. I love that. Okay. So are you ready for our speed round here?

Raju Dandigam

Yeah. All right.

Fred Schonenberg

Here's our rapid fire. So, what are the most overhyped trends in AI product development?

Raju Dandigam

I mean, nowadays I see a lot of fully autonomous agents being built without clear guardrails or real use cases. If you know the real use case, and there are guardrails and permissions around it, that's good. But fully autonomous agents that can do everything are, I'd say, overhyped.

Fred Schonenberg

On the other side of the coin, are there underrated levers from an engineering perspective?

Raju Dandigam

From the engineering perspective, reducing everyday developer friction, like making the development life cycle smoother, is underrated, but it actually helps the team move faster.

Fred Schonenberg

Is there one metric that every VP of engineering should be tracking?

Raju Dandigam

I would say failure rate: looking at failures, or at alerts on success versus failure, for whatever the major metrics are. It depends on the company, but basically the success or failure rate.
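As a rough illustration of the success-versus-failure metric Raju suggests, here is a minimal Python sketch that computes the failure rate for one monitoring window and flags it against a threshold. The `OutcomeWindow` and `should_alert` names, the 2% threshold, and the 100-event minimum are hypothetical values chosen for the example, not figures from the conversation.

```python
from dataclasses import dataclass


@dataclass
class OutcomeWindow:
    """Counts of successful and failed events in one monitoring window (hypothetical)."""

    successes: int
    failures: int

    @property
    def total(self) -> int:
        return self.successes + self.failures

    @property
    def failure_rate(self) -> float:
        # failure rate = failures / (successes + failures); 0.0 when nothing happened.
        return self.failures / self.total if self.total else 0.0


def should_alert(window: OutcomeWindow, threshold: float = 0.02, min_events: int = 100) -> bool:
    """Alert when the failure rate exceeds an assumed threshold, ignoring tiny samples."""
    return window.total >= min_events and window.failure_rate > threshold


if __name__ == "__main__":
    print(should_alert(OutcomeWindow(successes=980, failures=20)))  # exactly 2%, not above it
    print(should_alert(OutcomeWindow(successes=950, failures=50)))  # 5% failure rate
```

The minimum-event guard keeps a handful of early failures (say, 5 out of 10 calls) from paging anyone; which metrics count as "major" and what threshold is sensible depends on the company, as Raju notes.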

Fred Schonenberg

This is always an interesting one to think about. There’s this friction in the world of speed versus quality. We talked about it a little bit before—shipping fast versus sustained product. Which do you think creates more long-term value?

Raju Dandigam

Sustained quality will always create more long-term value, and I feel it automatically enables speed. So yeah: quality, and sustainable speed.

Fred Schonenberg

What is the biggest hidden cost in scaling AI features?

Raju Dandigam

Usage. If we don't monitor it and use AI effectively, we end up with higher usage and higher AI costs. There has to be a smarter way to use AI.

Fred Schonenberg

Do you see AI's advantage to be more so around its ability to personalize for each individual customer or more on the efficiency side on its ability to automate tasks across customers?

Raju Dandigam

On the enterprise, B2B side, I see it as automation. On the consumer side, maybe personalization. But mostly it's about automation: creating workflows, eliminating repetitive processes, and making things faster.

Fred Schonenberg

Raju, thank you so much for taking the time to share your insights. This is completely fascinating. Thank you for all the work you do to inspire innovation.

Raju Dandigam

Thank you. Thank you so much, Fred. It was great talking to you, and thanks for having me.

VentureFuel builds and accelerates innovation programs for industry leaders by helping them unlock the power of External Innovation via startup collaborations.